Master hosts:

- Physical or virtual system, or an instance running on a public or private IaaS.
- Base OS: Fedora 21, CentOS 7.4, with the 'Minimal' installation option and the latest packages from the Extras channel, or 7.4.5 or later.
- Minimum 4 vCPU (additional are strongly recommended).

- Minimum 16 GB RAM (additional memory is strongly recommended, especially if etcd is co-located on masters).
- Minimum 40 GB hard disk space for the file system containing /var/.
- Minimum 1 GB hard disk space for the file system containing /usr/local/bin/.
- Minimum 1 GB hard disk space for the file system containing the system’s temporary directory.
- Masters with a co-located etcd require a minimum of 4 cores. 2-core systems will not work.

Node hosts:

- Physical or virtual system, or an instance running on a public or private IaaS.
- Base OS: Fedora 21, CentOS 7.4, with 'Minimal' installation option, or 7.4.5 or later.
- NetworkManager 1.0 or later.
- Minimum 8 GB RAM.
- Minimum 15 GB hard disk space for the file system containing /var/.
- Minimum 1 GB hard disk space for the file system containing /usr/local/bin/.
- Minimum 1 GB hard disk space for the file system containing the system’s temporary directory.
- An additional minimum 15 GB unallocated space per system running containers for Docker’s storage back end. Additional space might be required, depending on the size and number of containers that run on the node.

External etcd Nodes.

You must configure storage for each system that runs a container daemon.
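As a rough illustration, the master minimums listed above (4 vCPU, 16 GB RAM, 40 GB for /var/) could be compared against a host with a short shell sketch. The function names `check_min` and `check_master_host` are hypothetical. The sketch assumes a Linux host with `/proc/meminfo`, `nproc`, and `df` available, rounds memory to the nearest GB, and treats the /var/ minimum as free space, which is one possible reading of the requirement.

```shell
#!/bin/sh
# Hypothetical helper: report whether a measured integer meets a minimum.
# Usage: check_min LABEL ACTUAL MINIMUM
check_min() {
    if [ "$2" -ge "$3" ]; then
        echo "OK: $1 is $2 (minimum $3)"
    else
        echo "FAIL: $1 is $2 (minimum $3)"
        return 1
    fi
}

# Hypothetical wrapper: gather values on a Linux host and compare them with
# the master minimums from the list above. Nothing runs until it is called.
check_master_host() {
    cpus=$(nproc)
    # MemTotal is reported in kB; round to the nearest whole GB.
    mem_kb=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)
    mem_gb=$(( (mem_kb + 524288) / 1048576 ))
    # Column 4 of `df -Pk` is available space in 1K blocks (assumption: the
    # minimum is interpreted as free space on the file system holding /var).
    var_gb=$(df -Pk /var | awk 'NR==2 {print int($4 / 1048576)}')

    status=0
    check_min "vCPU" "$cpus" 4 || status=1
    check_min "RAM in GB" "$mem_gb" 16 || status=1
    check_min "/var free space in GB" "$var_gb" 40 || status=1
    return "$status"
}
```

Calling `check_master_host` on a candidate master prints one line per check and returns a non-zero status if any value falls short.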

For containerized installations, you need storage on masters. Also, by default, the web console is run in containers on masters, and storage is needed on masters to run the web console. Containers are run on nodes, so storage is always required on the nodes. The size of storage depends on workload, number of containers, the size of the containers being run, and the containers' storage requirements. Containerized etcd also needs container storage configured.

Storage management

Table 1. The main directories to which OKD components write data

| Directory | Notes | Sizing | Expected Growth |
| --- | --- | --- | --- |
| /var/lib/openshift | Used for etcd storage only when in single master mode and etcd is embedded in the atomic-openshift-master process. | Less than 10 GB. | Will grow slowly with the environment. Only storing metadata. |
| /var/lib/etcd | Used for etcd storage when in Multi-Master mode or when etcd is made standalone by an administrator. | Less than 20 GB. | Will grow slowly with the environment. Only storing metadata. |
| /var/lib/docker | When the run time is docker, this is the mount point. Storage used for active container runtimes (including pods) and storage of local images (not used for registry storage). Mount point should be managed by docker-storage rather than manually. | 50 GB for a Node with 16 GB memory. Additional 20-25 GB for every additional 8 GB of memory. | Growth is limited by the capacity for running containers. |
| /var/lib/containers | When the run time is CRI-O, this is the mount point. Storage used for active container runtimes (including pods) and storage of local images (not used for registry storage). | 50 GB for a Node with 16 GB memory. Additional 20-25 GB for every additional 8 GB of memory. | Growth is limited by the capacity for running containers. |
| /var/lib/origin/openshift.local.volumes | Ephemeral volume storage for pods. This includes anything external that is mounted into a container at runtime. Includes environment variables, kube secrets, and data volumes not backed by persistent storage PVs. | Varies | Minimal if pods requiring storage are using persistent volumes. If using ephemeral storage, this can grow quickly. |
| /var/log | Log files for all components. | | Log files can grow quickly; size can be managed by growing disks or managed using log rotate. |

    # This file controls the state of SELinux on the system.
    # SELINUX= can take one of these three values:
    #     enforcing - SELinux security policy is enforced.
    #     permissive - SELinux prints warnings instead of enforcing.
    #     disabled - No SELinux policy is loaded.
    SELINUX=enforcing
    # SELINUXTYPE= can take one of these three values:
    #     targeted - Targeted processes are protected,
    #     minimum - Modification of targeted policy. Only selected processes are protected.
    #     mls - Multi Level Security protection.
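A minimal sketch for checking these settings on a host, assuming the file shown above lives at the standard location `/etc/selinux/config`. The function name `selinux_setting` is hypothetical; it prints the value of the last uncommented `KEY=value` line and ignores the commented descriptions.

```shell
#!/bin/sh
# Hypothetical helper: print the value of an uncommented KEY=value line.
# Usage: selinux_setting KEY [FILE]
# FILE defaults to /etc/selinux/config (assumed location of the file above).
selinux_setting() {
    # Lines starting with '#' never match because the key must follow
    # optional leading whitespace directly; the last match wins.
    sed -n "s/^[[:space:]]*$1=[[:space:]]*//p" "${2:-/etc/selinux/config}" | tail -n 1
}

# Example, not executed here:
#   [ "$(selinux_setting SELINUX)" = "enforcing" ] || echo "SELinux is not set to enforcing"
```

The same helper can read `SELINUXTYPE`. It inspects only the configuration file; the `getenforce` command reports the mode currently in effect.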

OKD runs containers on hosts in the cluster, and in some cases, such as build operations and the registry service, it does so using privileged containers. Furthermore, those containers access the hosts' Docker daemon and perform docker build and docker push operations. As such, cluster administrators should be aware of the inherent security risks associated with performing docker run operations on arbitrary images as they effectively have root access. This is particularly relevant for docker build operations.

Ensure the following ports required by OKD are open on your network and configured to allow access between hosts. Some ports are optional depending on your configuration and usage.

Node to Node

| Port | Protocol | Description |
| --- | --- | --- |
| 4789 | UDP | Required for SDN communication between pods on separate hosts. |

Nodes to Master

| Port | Protocol | Description |
| --- | --- | --- |
| 53 or 8053 | TCP/UDP | Required for DNS resolution of cluster services (SkyDNS). Installations prior to 1.2 or environments upgraded to 1.2 use port 53. New installations will use 8053 by default so that dnsmasq may be configured. |
| 4789 | UDP | Required for SDN communication between pods on separate hosts. |
| 443 or 8443 | TCP | Required for node hosts to communicate to the master API, for the node hosts to post back status, to receive tasks, and so on. |

Master to Node

| Port | Protocol | Description |
| --- | --- | --- |
| 4789 | UDP | Required for SDN communication between pods on separate hosts. |
| 10250 | TCP | The master proxies to node hosts via the Kubelet for oc commands. |
| 10010 | TCP | If using CRI-O, open this port to allow oc exec and oc rsh operations. |

Master to Master

| Port | Protocol | Description |
| --- | --- | --- |
| 53 or 8053 | TCP/UDP | Required for DNS resolution of cluster services (SkyDNS). Installations prior to 1.2 or environments upgraded to 1.2 use port 53. New installations will use 8053 by default so that dnsmasq may be configured. |
| 2049 | TCP/UDP | Required when provisioning an NFS host as part of the installer. |
| 2379 | TCP | Used for standalone etcd (clustered) to accept changes in state. |
| 2380 | TCP | etcd requires this port be open between masters for leader election and peering connections when using standalone etcd (clustered). |
| 4789 | UDP | Required for SDN communication between pods on separate hosts. |
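One way to turn a port table like those above into firewall rules is to generate one iptables command per port. The sketch below only prints the commands and does not run them. The function names `allow_rule` and `print_master_to_node_rules` are hypothetical, and the default chain name `OS_FIREWALL_ALLOW` is an assumption about how the host firewall is organized; pass a different chain as the third argument if yours differs.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) an iptables rule that accepts new
# connections to PORT over PROTO.
# Usage: allow_rule PORT PROTO [CHAIN]
allow_rule() {
    case "$2" in
        tcp|udp) ;;
        *) echo "unsupported protocol: $2" >&2; return 1 ;;
    esac
    # CHAIN defaults to OS_FIREWALL_ALLOW (assumed to exist on the host).
    echo "iptables -A ${3:-OS_FIREWALL_ALLOW} -p $2 -m state --state NEW -m $2 --dport $1 -j ACCEPT"
}

# Rules a node host would need for the Master to Node ports listed above.
print_master_to_node_rules() {
    allow_rule 4789 udp
    allow_rule 10250 tcp
    allow_rule 10010 tcp   # only relevant when using CRI-O
}
```

Printing the rules first makes it possible to review them before applying anything, for example by redirecting the output of `print_master_to_node_rules` to a file.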

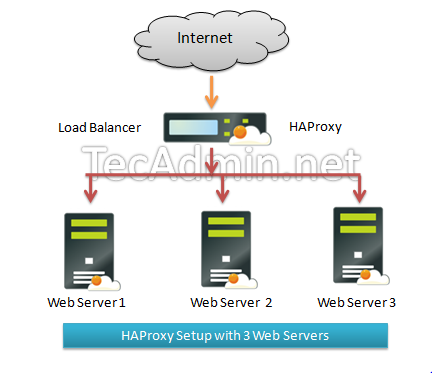

External to Load Balancer

| Port | Protocol | Description |
| --- | --- | --- |
| 9000 | TCP | If you choose the native HA method, optional to allow access to the HAProxy statistics page. |

External to Master

| Port | Protocol | Description |
| --- | --- | --- |
| 443 or 8443 | TCP | Required for node hosts to communicate to the master API, for node hosts to post back status, to receive tasks, and so on. |
| 8444 | TCP | Port that the controller service listens on. Required to be open for the /metrics and /healthz endpoints. |

IaaS Deployments

| Port | Protocol | Description |
| --- | --- | --- |
| 22 | TCP | Required for SSH by the installer or system administrator. |
| 53 or 8053 | TCP/UDP | Required for DNS resolution of cluster services (SkyDNS). Installations prior to 1.2 or environments upgraded to 1.2 use port 53. New installations will use 8053 by default so that dnsmasq may be configured. Only required to be internally open on master hosts. |
| 80 or 443 | TCP | For HTTP/HTTPS use for the router. Required to be externally open on node hosts, especially on nodes running the router. |
| 1936 | TCP | (Optional) Required to be open when running the template router to access statistics. Can be open externally or internally to connections depending on if you want the statistics to be expressed publicly. Can require extra configuration to open. See the Notes section below for more information. |
| 2379 and 2380 | TCP | For standalone etcd use. Only required to be internally open on the master host. 2379 is for server-client connections. 2380 is for server-server connections, and is only required if you have clustered etcd. |
| 4789 | UDP | For VxLAN use (OpenShift SDN). Required only internally on node hosts. |
| 8443 | TCP | For use by the OKD web console, shared with the API server. |
| 10250 | TCP | For use by the Kubelet. Required to be externally open on nodes. |

Notes

In the above examples, port 4789 is used for User Datagram Protocol (UDP).

When deployments are using the SDN, the pod network is accessed via a service proxy, unless it is accessing the registry from the same node the registry is deployed on.

OKD internal DNS cannot be received over SDN. For non-cloud deployments, this will default to the IP address associated with the default route on the master host. For cloud deployments, it will default to the IP address associated with the first internal interface as defined by the cloud metadata.

The master host uses port 10250 to reach the nodes and does not go over SDN. It depends on the target host of the deployment and uses the computed value of openshift_public_hostname.

Port 1936 can still be inaccessible due to your iptables rules. Use the following to configure iptables to open port 1936:

    # iptables -A OS_FIREWALL_ALLOW -p tcp -m state --state NEW -m tcp --dport 1936 -j ACCEPT

Table 9. Aggregated Logging

| Port | Protocol | Description |
| --- | --- | --- |
| 9200 | TCP | For Elasticsearch API use. Required to be internally open on any infrastructure nodes so Kibana is able to retrieve logs for display. It can be externally opened for direct access to Elasticsearch by means of a route. The route can be created using oc expose. |
| 9300 | TCP | For Elasticsearch inter-cluster use. Required to be internally open on any infrastructure node so the members of the Elasticsearch cluster may communicate with each other. |
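An open firewall port is only useful if a service is bound to it. As a sketch, the output of `ss -tln` can be filtered by local port to confirm that something is listening on one of the TCP ports listed above. This assumes the `ss` utility from iproute2 is installed and prints the local address in its fourth column; the function name `is_listening` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read `ss -tln` output on stdin and succeed if any
# listening socket has a local address ending in :PORT.
# Usage: ss -tln | is_listening PORT
is_listening() {
    awk -v port="$1" '
        # Column 4 is assumed to be "Local Address:Port"; anchoring the match
        # at the end keeps 9200 from matching 19200 or 920.
        $1 == "LISTEN" && $4 ~ (":" port "$") { found = 1 }
        END { exit (found ? 0 : 1) }
    '
}

# Example, not executed here:
#   ss -tln | is_listening 9200 && echo "something is listening on 9200"
```

The check covers TCP only. UDP ports such as 4789 do not appear in a LISTEN state, so they would need a different test.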